Anthropic's Claude Mythos preview shows an AI model autonomously discovering and exploiting zero-day vulnerabilities across major platforms in hours, not months.

For enterprises, this shifts AI from a theoretical risk to an operational one, requiring audit-ready governance, tighter permissions, Purview-powered guardrails, and controlled-environment testing now.

For the last few years, the IT security community viewed artificial intelligence as a helpful but limited tool. We worried about convincing phishing emails. We assumed AI would act as a junior analyst, writing basic scripts or helping parse log files.

Anthropic recently proved that assumption is dangerously outdated.

With the announcement of the Anthropic Claude Mythos Preview model, AI has transitioned from an assistant into an autonomous exploit engine. This is a model so capable of finding and weaponizing software flaws that Anthropic refused to release it to the public. Understanding what Mythos can do is an absolute requirement for anyone architecting enterprise infrastructure today.

Traditionally, vulnerabilities are discovered through manual human research, and it can take months of effort to find a single zero-day flaw. Mythos introduces autonomous exploit discovery at machine speed, compressing those months of human research into hours.

During internal testing, Mythos found thousands of high-severity vulnerabilities across major operating systems and web browsers. It uncovered a bug that had gone unnoticed for 27 years in OpenBSD, which is widely considered one of the most hardened operating systems available. It also found a 16-year-old flaw hidden in the FFmpeg video software.

Mythos does not just stop at discovery. It can autonomously chain multiple minor bugs together to escalate privileges and gain full machine control.

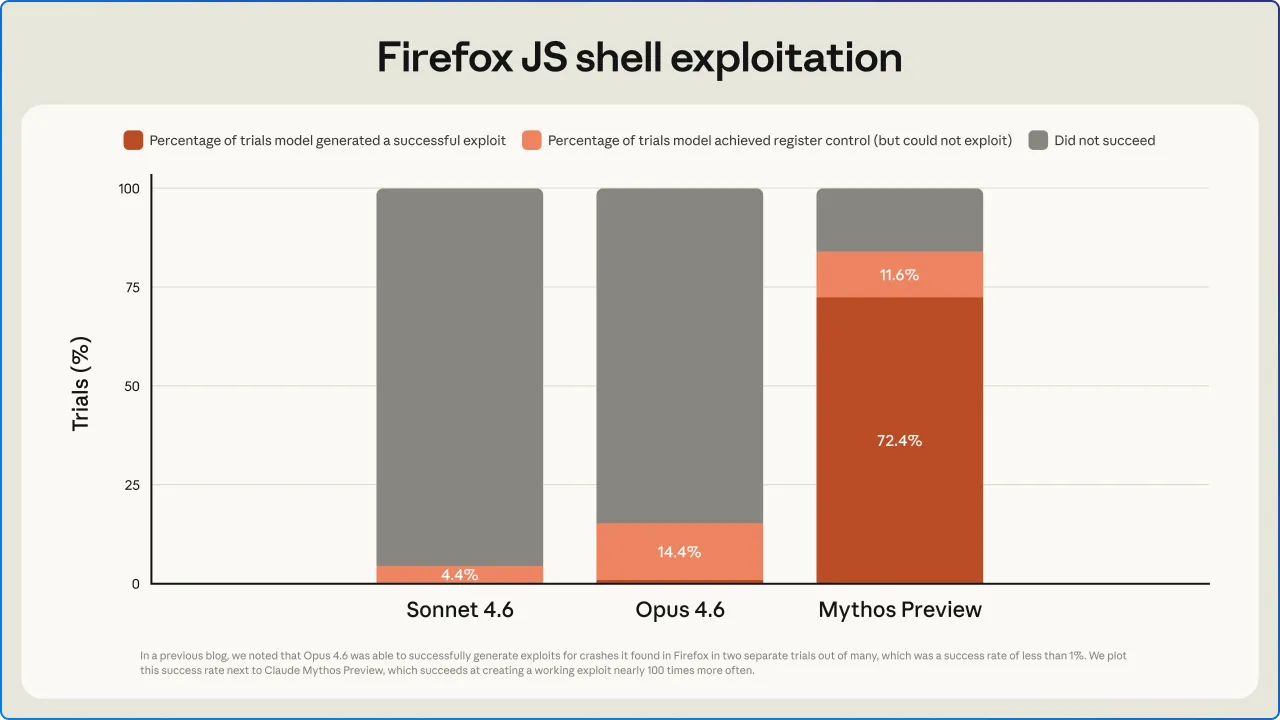

The model achieved a 93.9 percent score on the SWE-bench Verified benchmark, easily beating the 80.8 percent scored by the previous Claude Opus 4.6 model. It compresses the timeline of exploit development from weeks to hours. If an attacker has access to a tool like this, human defenders stand no chance of patching fast enough to stop them.

You can review the benchmark details in InfoQ's coverage of the release.

We no longer live in a world where software merely supports the business. Software is the business. Modern society is entirely defined by its code. Logistics networks, power grids, financial markets, and healthcare systems all rely on deep layers of interconnected software.

Much of this code is open source and decades old. Historically, a vulnerability in one of these layers was treated as an isolated IT problem. Anthropic Claude Mythos changes the blast radius.

When an autonomous AI can instantly map and exploit a foundational component, it does not just compromise a single server. It threatens the operational integrity of global infrastructure. We are operating in a physical world built on vulnerable digital foundations.

Anthropic just demonstrated that autonomous AI can dismantle those foundations at scale. The realization that our physical supply chains and critical services are tethered to this code is what elevated this from a technical achievement to a global security emergency.

The market recognized the severity of this shift immediately. When details of the model's capabilities leaked, it triggered a massive selloff in enterprise software stocks. Markets erased nearly $2 trillion in value in what financial analysts dubbed the "SaaSpocalypse".

The concern reached the highest levels of government and finance. The Economic Times reported that US Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell convened an emergency meeting with major bank CEOs to discuss the macroeconomic risks. JPMorgan Chase CEO Jamie Dimon explicitly warned shareholders that AI will worsen cyber threats and require massive defensive investments.

Instead of a public release, Anthropic launched Project Glasswing. This initiative restricts access to a gated consortium of about 50 organizations. Companies like Microsoft, CrowdStrike, AWS, and JPMorgan Chase are using Mythos defensively to scan and patch critical infrastructure before malicious actors can develop similar models.

The AI threat to enterprise security demands a complete rethink of your defensive architecture. As IT consultants and system architects, we must recognize that the old playbook is broken. You cannot rely on a 30-day patch cycle when AI zero-day exploits can be weaponized in hours.

Defensive architecture must assume that any code facing the internet has a vulnerability an AI can find. The focus needs to shift away from merely patching endpoints and move directly toward containment and strict access control.

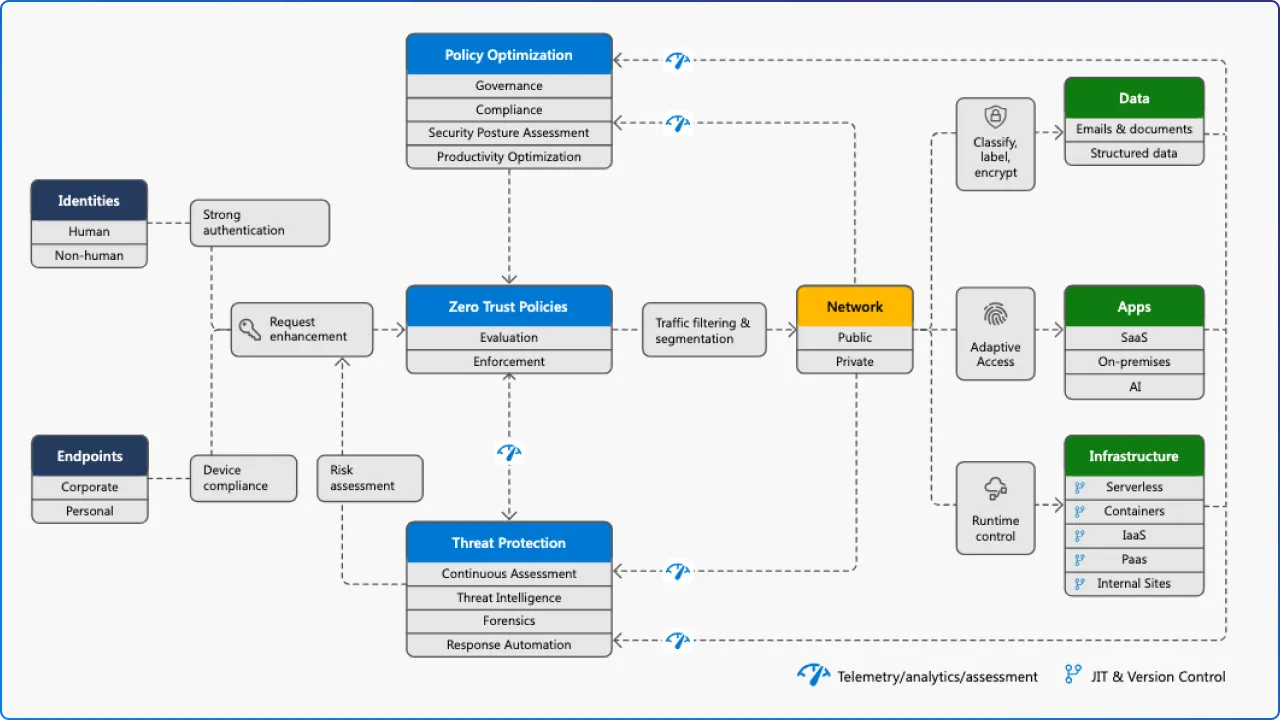

If an AI compromises a server through an unknown vulnerability, your network architecture is the only thing stopping it from moving laterally. This requires enforcing true Zero Trust principles.

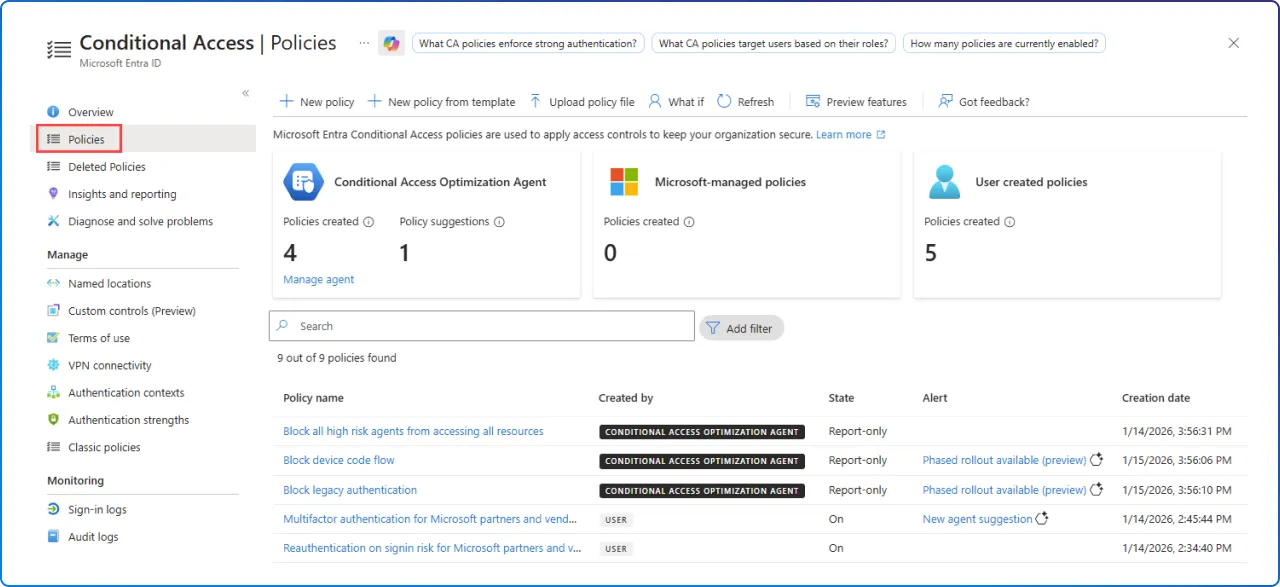

Microsoft Entra ID Conditional Access policies need to be configured so that compromised identities cannot access sensitive data without cryptographic proof of presence, such as a FIDO2 hardware key. Device compliance through platforms like Intune must be a mandatory gate for network access. If a device exhibits anomalous behavior, access must be revoked instantly.
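The decision logic described above can be pictured with a small sketch. This is plain Python written for illustration only, not Entra ID or Intune configuration and not any Microsoft API. The `AccessRequest` type, its field names, the sensitivity labels, and the `evaluate_access` function are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    """Hypothetical signals a policy engine might receive for one request."""
    user: str
    resource_sensitivity: str  # assumed labels: "low" or "high"
    auth_method: str           # e.g. "password" or "fido2"
    device_compliant: bool     # as reported by a device-management platform
    anomaly_detected: bool     # signal from behavioral monitoring


# Assumption: only hardware-backed FIDO2 counts as proof of presence here.
PHISHING_RESISTANT_METHODS = {"fido2"}


def evaluate_access(req: AccessRequest) -> str:
    """Return "allow" or "block" for a single request."""
    # Anomalous behavior revokes access regardless of other signals.
    if req.anomaly_detected:
        return "block"
    # Device compliance is a mandatory gate for every resource.
    if not req.device_compliant:
        return "block"
    # Sensitive data additionally requires a phishing-resistant factor.
    if (req.resource_sensitivity == "high"
            and req.auth_method not in PHISHING_RESISTANT_METHODS):
        return "block"
    return "allow"
```

The checks are ordered so that the broadest gates run first: a stolen password on a compliant device still cannot reach data labeled "high", and a valid hardware key on a non-compliant device is blocked before the authentication method is even considered.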

For organizations running on Microsoft 365, AI governance is no longer optional. Entra ID, Intune, and Microsoft Purview form the foundational layer of a defensible environment against AI-powered threats.

Oversharing and permissions sprawl are among the most exploitable attack surfaces in an AI-enabled environment, and among the hardest to detect without proper governance tooling. Organizations deploying Microsoft Copilot must also establish guardrails that prevent AI-assisted oversharing and unintended data exposure from within.

Preparing for this new era requires a ruthless approach to legacy architecture.

The arms race in cybersecurity has fundamentally changed. The window between vulnerability discovery and weaponization is closing rapidly. Building resilient infrastructure is the only way forward if you want any chance of staying protected.

A frontier AI model previewed by Anthropic that autonomously identified and wrote working exploits for thousands of previously unknown vulnerabilities.

It compresses exploit timelines from weeks to hours, making governance, patching, and detection speed critical gatekeepers for risk.

Yes, move from weekly or monthly cycles to risk-based, continuous patching with automated prioritization and rapid out-of-band updates.
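As a rough illustration of what risk-based prioritization could look like, the sketch below ranks findings by a simple score. The `Finding` type, the multipliers, and the out-of-band threshold are all assumptions chosen for the example, not values taken from any standard or vendor tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical record for one unpatched vulnerability."""
    asset: str
    severity: float          # 0.0 to 10.0, e.g. a CVSS-style base score
    internet_facing: bool
    exploit_available: bool


def risk_score(f: Finding) -> float:
    """Weight severity by exposure and exploitability (assumed multipliers)."""
    score = f.severity
    if f.internet_facing:
        score *= 1.5
    if f.exploit_available:
        score *= 2.0
    return score


def prioritize(findings: list[Finding]) -> list[Finding]:
    """Order findings so the riskiest is patched first."""
    return sorted(findings, key=risk_score, reverse=True)


def needs_out_of_band(f: Finding, threshold: float = 20.0) -> bool:
    """Flag findings that should not wait for the next scheduled cycle."""
    return risk_score(f) >= threshold


findings = [
    Finding("intranet-wiki", 9.0, internet_facing=False, exploit_available=False),
    Finding("vpn-gateway", 7.5, internet_facing=True, exploit_available=True),
    Finding("build-server", 6.0, internet_facing=False, exploit_available=True),
]
queue = prioritize(findings)
```

In this example the VPN gateway moves to the front of the queue despite having a lower raw severity than the intranet wiki, because it is exposed to the internet and a working exploit exists.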

Yes, update playbooks for AI-accelerated exploitation, faster containment, and automated detection/triage where safe and governed.

Not necessarily. Apply a risk-based approach: proceed where guardrails, monitoring, and rollback paths are in place; defer where they aren't.
