As organizations move from isolated AI experiments into broader deployment, governance gets harder fast. What begins as a few pilot use cases can quickly expand into a mix of Microsoft 365 Copilot, Copilot Studio agents, connectors, workflows, and department-led requests. Microsoft’s current Copilot Control System guidance reflects that reality by framing enterprise readiness around three pillars: security and governance, management controls, and measurement and reporting. Microsoft also identifies agent lifecycle as a core management capability inside that model.

That is where an Agent PMO comes in. An Agent PMO is not just a committee or a review board. It is the operating layer that helps make Copilot governance and AI agent governance practical across the business. It gives organizations a repeatable way to prioritize requests, define ownership, manage agent lifecycle decisions, coordinate reviews, and measure value over time. Without that structure, teams often end up with fragmented decisions, inconsistent controls, and very little clarity around who owns what.

In other words, an Agent PMO is what turns a Microsoft Copilot governance framework into a working agent operating model. It gives the organization a scalable way to run AI that supports innovation without leaving governance behind. That is increasingly important as Microsoft continues expanding agent creation, deployment, monitoring, and analytics capabilities across Copilot Studio and Microsoft 365.

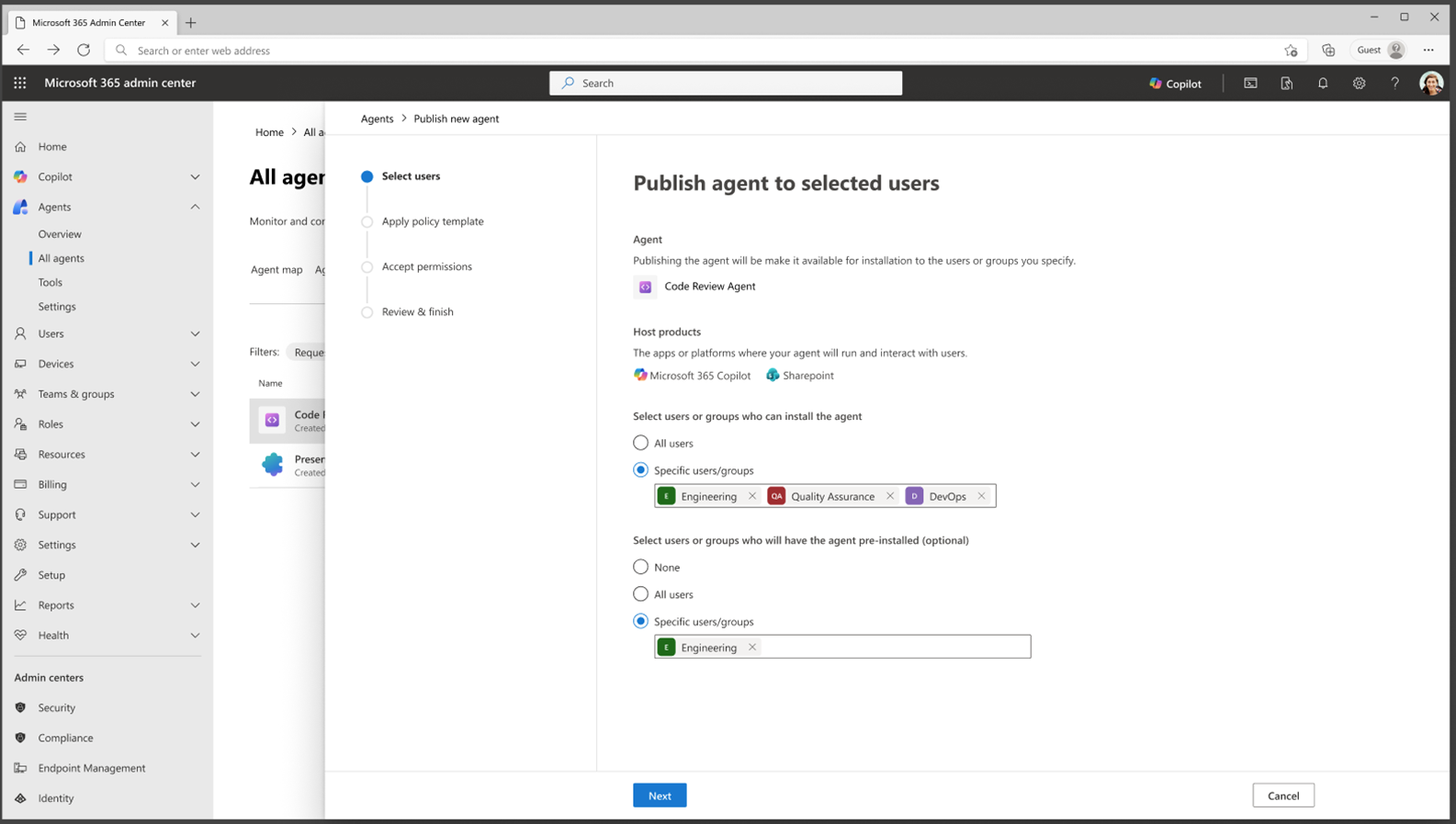

An Agent PMO is best understood as a coordination layer for AI agent governance. It does not necessarily build every agent itself, and it does not replace IT, security, or business ownership. Instead, it creates structure around how agent ideas are evaluated, how risk is reviewed, how deployment decisions are made, and how outcomes are measured. Microsoft describes Copilot Studio as a low-code tool for building agents and agent flows, which means more teams can participate in building agent experiences. That flexibility increases the need for a central model that keeps governance consistent.

A practical Agent PMO usually owns or coordinates a few core areas: intake, prioritization, standards, review workflows, lifecycle oversight, and reporting. That is why the term AI PMO model fits here too. The PMO is not there to slow things down. It is there to make sure the organization can move faster with more clarity, better controls, and stronger accountability.

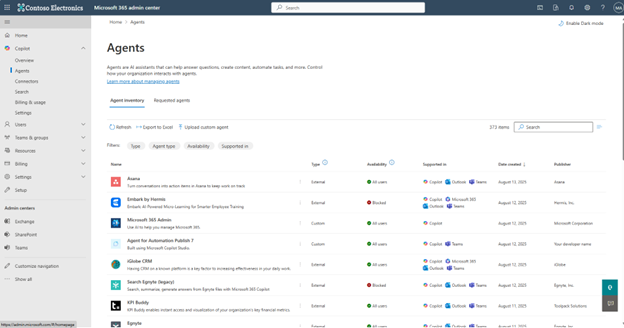

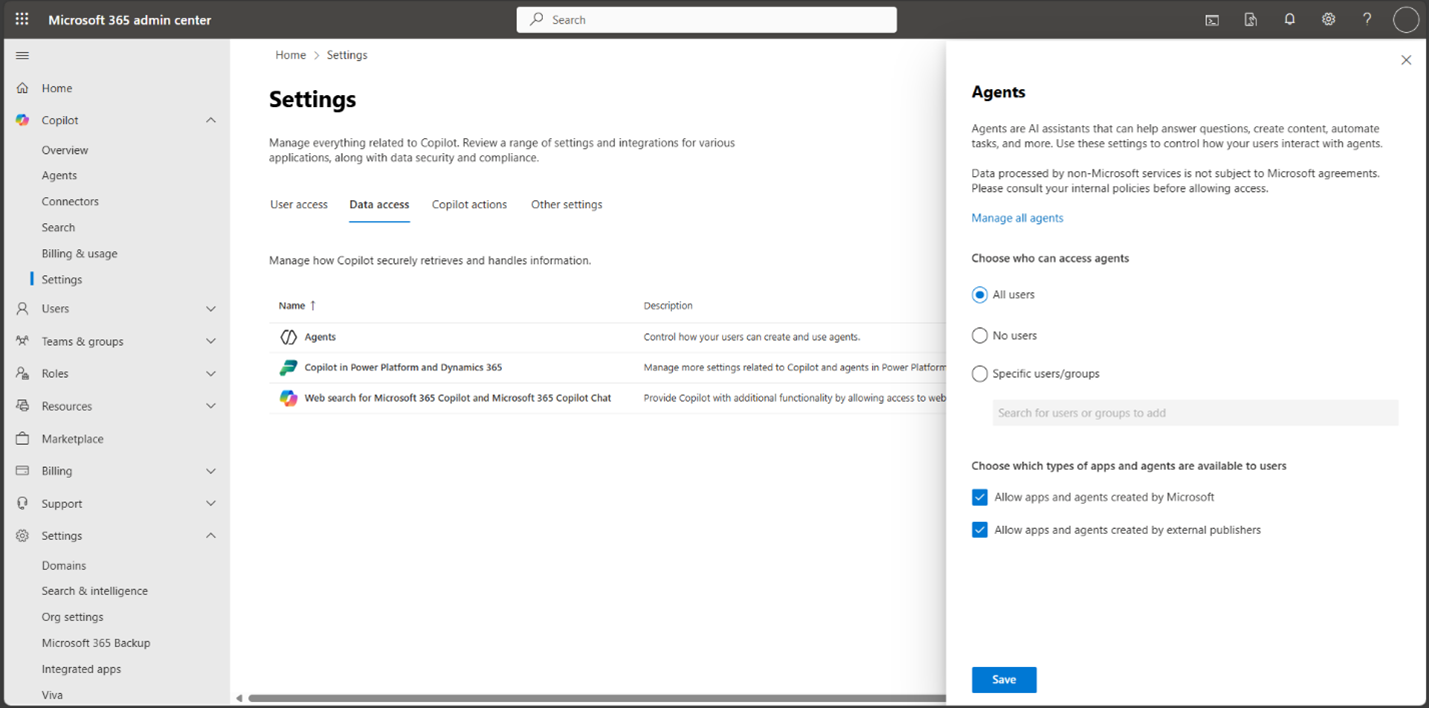

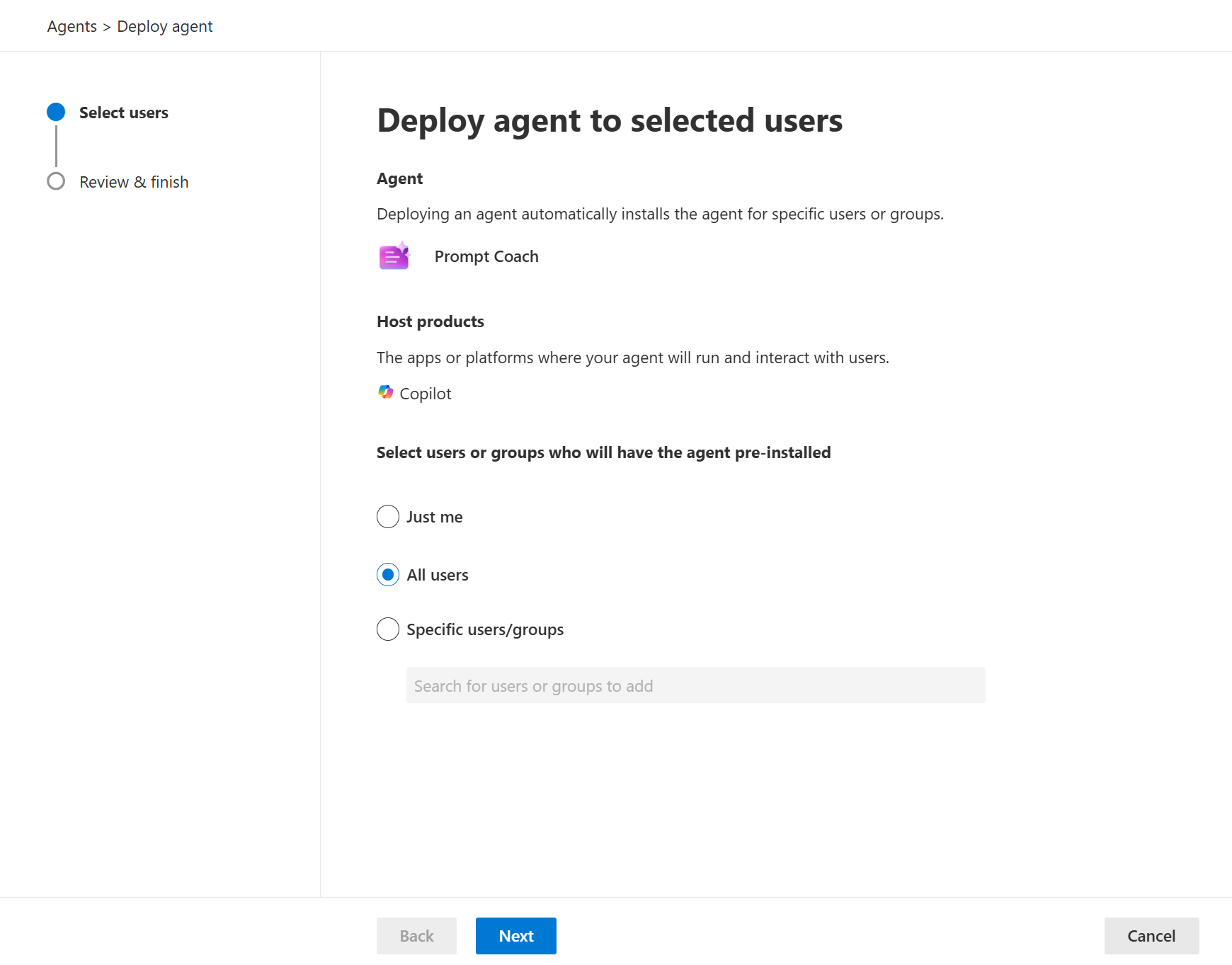

Many organizations begin Copilot governance by focusing on settings, permissions, and policies. Those are important, but Microsoft’s Copilot Control System makes clear that governance also depends on management controls and measurement, not just security. The management controls pillar specifically calls out licensing and metering, agent lifecycle, and customization. That means organizations need a way to manage how agents are requested, approved, deployed, changed, and retired.
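One way to picture the requested, approved, deployed, changed, and retired stages is as a small state machine. The sketch below is illustrative only: the `Stage` names mirror the stages mentioned above, but the `ALLOWED` transitions and the `advance` helper are assumptions, not something Microsoft's guidance prescribes.

```python
from enum import Enum


class Stage(Enum):
    REQUESTED = "requested"
    APPROVED = "approved"
    DEPLOYED = "deployed"
    CHANGED = "changed"
    RETIRED = "retired"


# Hypothetical transition rules; each organization would define its own.
ALLOWED = {
    Stage.REQUESTED: {Stage.APPROVED, Stage.RETIRED},
    Stage.APPROVED: {Stage.DEPLOYED, Stage.RETIRED},
    Stage.DEPLOYED: {Stage.CHANGED, Stage.RETIRED},
    # In this sketch, a changed agent goes back through approval.
    Stage.CHANGED: {Stage.APPROVED, Stage.RETIRED},
    Stage.RETIRED: set(),
}


def advance(current: Stage, target: Stage) -> Stage:
    """Return the target stage if the move is allowed, otherwise raise."""
    if target not in ALLOWED[current]:
        raise ValueError(f"{current.value} -> {target.value} is not allowed")
    return target
```

The point of writing the transitions down, in whatever form, is that an agent cannot skip from request to deployment without an approval step in between.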

This is exactly where an Agent PMO adds value. It connects policy to execution. It makes sure governance is not just a document or a list of admin settings. It creates a practical agent governance model that can support Copilot risk and compliance, Copilot access management, and decision-making at scale. Without that kind of operating structure, governance often becomes reactive, and teams only start asking ownership or risk questions after the agent is already live.

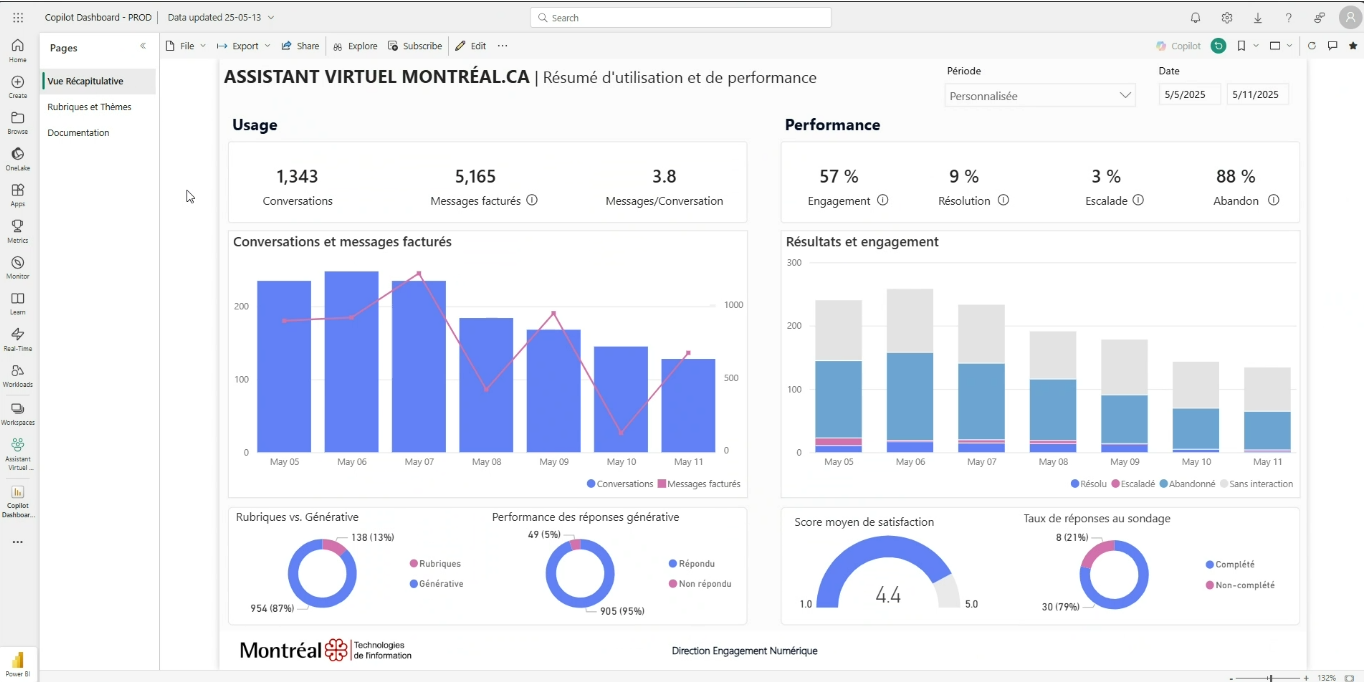

A strong Agent PMO usually owns or coordinates the workflows that make governance repeatable. That includes intake and prioritization, AI risk reviews, ownership assignment, release and agent lifecycle management, monitoring, and AI success metrics. These are the workflows that keep the environment organized as more use cases move from pilot into production. Microsoft’s current Copilot Studio analytics guidance is built around helping teams understand performance, outcomes, and effectiveness over time, which fits directly with a PMO model that treats optimization as part of governance.

This is also where a lightweight governance framework matters. The goal is not to overcomplicate every request. The goal is to make sure there is a consistent front door, a review path, a clear owner, and a way to know whether an agent is creating value. That is what supports governance without slowing innovation.
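As a rough illustration of that "consistent front door," an intake check can be as small as confirming that a request names an owner, a review path, and a success measure before it moves forward. The `AgentRequest` fields and the `intake_gaps` helper below are hypothetical names chosen for this sketch.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AgentRequest:
    """A hypothetical intake record for a proposed agent."""
    title: str
    owner: Optional[str] = None           # accountable person or team
    review_path: Optional[str] = None     # which review the request will follow
    success_metric: Optional[str] = None  # how value will be judged


def intake_gaps(request: AgentRequest) -> List[str]:
    """List the governance basics still missing from a request."""
    gaps = []
    if not request.owner:
        gaps.append("owner")
    if not request.review_path:
        gaps.append("review_path")
    if not request.success_metric:
        gaps.append("success_metric")
    return gaps
```

A request with no gaps is ready for prioritization; anything else goes back to the requester with a short, specific list rather than a long form.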

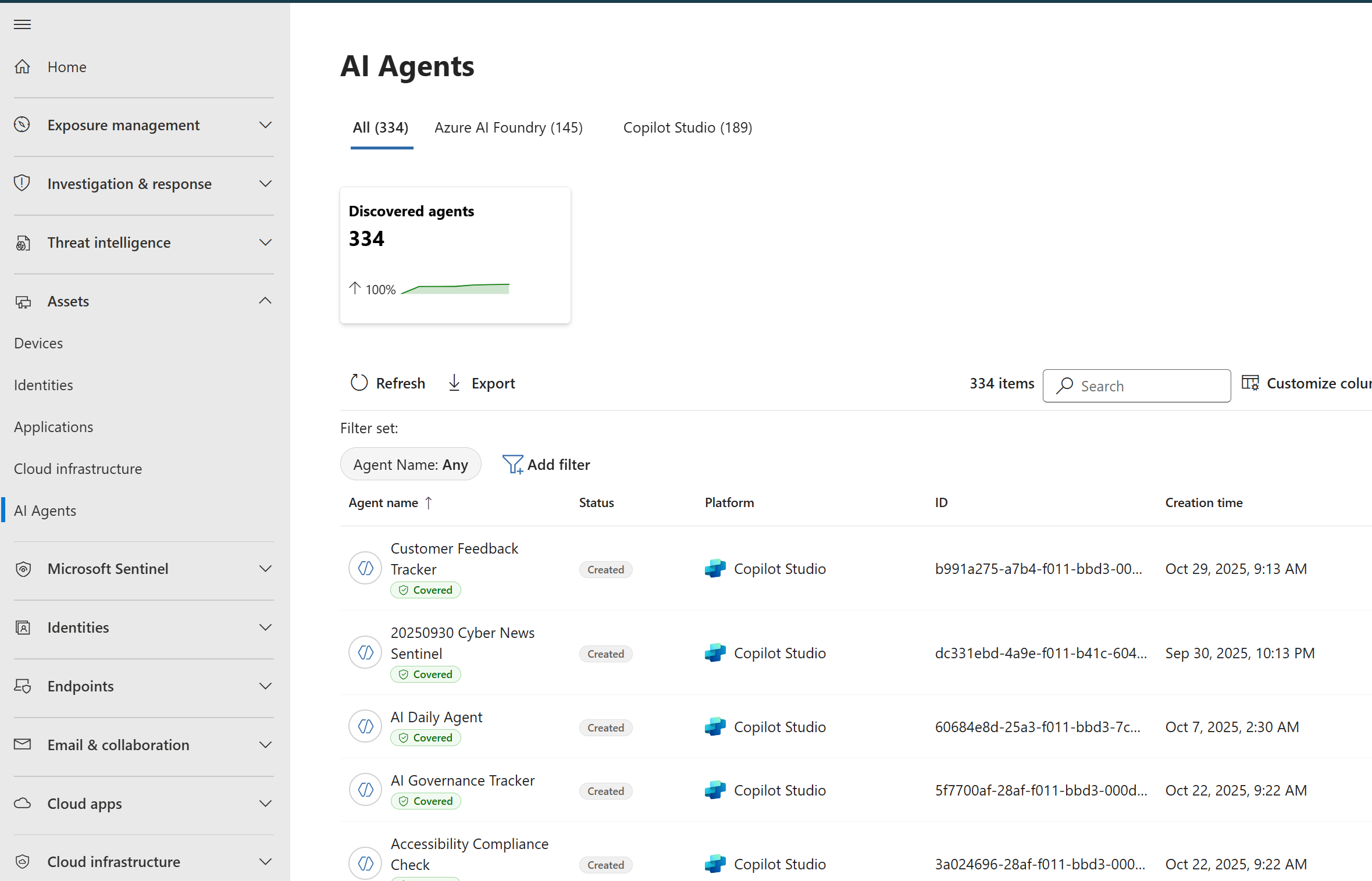

One of the most important jobs of an Agent PMO is making fusion team governance real. AI agents cut across security, IT, operations, and business teams. That means governance cannot stay inside one silo. Someone needs to define who reviews access, who assesses sensitive use cases, who signs off on rollout, and who remains accountable after deployment. Microsoft’s activity review guidance for Copilot Studio shows that agent behavior can be inspected through transcripts, trigger payloads, responses, and activity maps, which reinforces that governance needs real operational visibility, not just pre-launch approvals.

That is why the PMO often helps formalize agent access control, Copilot access management, and AI risk reviews. It ensures the organization has a repeatable process for deciding what an agent can access, what it can do, and what level of review is appropriate before broader release. Without that structure, teams may still be building agents, but they are doing so without a mature governance model.
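Deciding "what level of review is appropriate" can be made repeatable with a simple tiering rule. The sketch below assumes three yes/no risk factors and three tiers; the factors, the tier names, and the scoring are all illustrative assumptions rather than a recommended standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentProfile:
    """Hypothetical risk factors captured at intake."""
    reads_sensitive_data: bool
    can_take_actions: bool  # e.g. updates records, not only answers questions
    org_wide: bool          # released beyond a single team


def review_tier(profile: AgentProfile) -> str:
    """Map the number of risk factors present to a review tier."""
    factors = sum(
        [profile.reads_sensitive_data, profile.can_take_actions, profile.org_wide]
    )
    if factors == 0:
        return "light"
    if factors == 1:
        return "standard"
    return "full"
```

A real model would likely weight these factors differently, but even a rule this simple gives every team the same answer for the same agent.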

An Agent PMO also matters because governance does not end at launch. Microsoft’s Copilot Control System measurement and reporting guidance emphasizes adoption, productivity impact, business value, ROI, and operational reporting, including reporting on agents and deployments. That makes it clear that scaling Copilot and agents responsibly requires ongoing measurement, not just initial approval.

In practice, that means the PMO helps support cross-environment tracking, lifecycle decisions, performance reporting, and broader AI governance at scale. It helps answer questions like: Which agents are active? Who owns them? Are they still aligned to business value? Which ones need improvement, rollback, or retirement? Those are the kinds of questions that separate an actual agent operating model from ad hoc experimentation.
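Those questions become easy to answer once agents are tracked in a single registry. The following is a minimal sketch, assuming an in-memory list of hypothetical `AgentRecord` entries and an arbitrary 180-day review window; it does not call any Microsoft API.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class AgentRecord:
    """A hypothetical registry entry for one agent."""
    name: str
    owner: Optional[str]
    active: bool
    last_value_review: date


def active_agents(registry: List[AgentRecord]) -> List[AgentRecord]:
    """Which agents are active?"""
    return [a for a in registry if a.active]


def unowned(registry: List[AgentRecord]) -> List[AgentRecord]:
    """Which active agents have no named owner?"""
    return [a for a in registry if a.active and not a.owner]


def due_for_review(
    registry: List[AgentRecord], today: date, max_age_days: int = 180
) -> List[AgentRecord]:
    """Which active agents have not had a value review within the window?"""
    cutoff = today - timedelta(days=max_age_days)
    return [a for a in registry if a.active and a.last_value_review < cutoff]
```

Agents that show up in `unowned` or `due_for_review` are the natural candidates for an improvement, rollback, or retirement conversation.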

The most important thing to understand is that an Agent PMO is not just a group of people meeting once in a while. It is a way of operating. It is the structure that connects intake, approvals, standards, lifecycle decisions, measurement, and optimization into one repeatable system. That is why it is best thought of as both an Agent PMO and an AI PMO model. It gives the organization a clear way to govern Copilot and agents without relying on scattered decisions or informal ownership.

For organizations serious about Copilot governance and AI agent governance, the real question is not whether they need governance. The real question is whether they have an operating model that can support it. In most cases, that is exactly what the Agent PMO is there to provide.

Our Agent PMO event explored what it takes to turn governance into a working model for Copilot and AI agents, including practical guidance around lifecycle, controls, and measurement.
