As organizations move from experimentation into broader deployment, governance becomes less about having a policy and more about having repeatable workflows. That is especially true for Copilot governance and AI agent governance, where the volume of requests, the pace of change, and the mix of business and technical stakeholders can quickly overwhelm ad hoc decision-making.

That is where an Agent PMO becomes valuable. A strong agent operating model does not just define principles. It standardizes the workflows that turn governance into something people can actually follow. Without those workflows, organizations often end up with duplicate requests, inconsistent reviews, unclear ownership, weak Copilot risk and compliance controls, and limited visibility into which agents are actually creating value.

If the earlier question was why governance fails without an operating model, the next question is what that model should actually standardize. In practice, most teams need five workflows: intake and prioritization, risk and access review, lifecycle and release management, monitoring and issue response, and value measurement. Together, these create a practical governance operating model for AI that supports AI governance at scale without creating unnecessary friction.

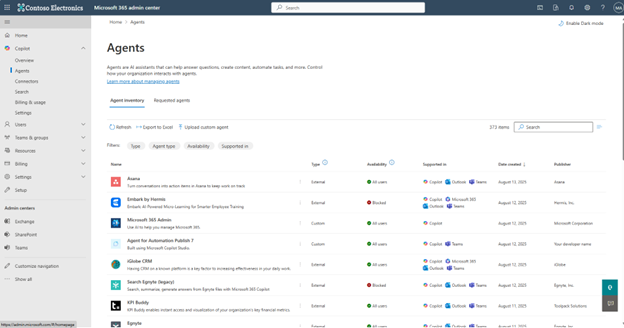

The first workflow every Agent PMO should standardize is intake. Once teams across the business start requesting copilots, custom agents, and agent-driven automations, informal approvals stop working.

A standardized intake workflow should capture the business problem, expected value, systems touched, data sensitivity, target users, required approvals, and likely complexity.

This becomes the start of an AI governance workflow and helps the organization compare requests more consistently. It also reduces duplication and gives leaders a way to prioritize based on risk and business value instead of whoever asked first.

This is where a practical AI PMO model starts to show up. The goal is not to bury teams in forms. The goal is to create a lightweight governance framework that helps the business decide what should move forward, what needs more review, and what should be redirected to an existing solution.
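To make the intake fields and the prioritization idea above concrete, here is a minimal Python sketch. The `IntakeRequest` class, the 1-5 scoring scales, and the weighting in `priority_score` are all illustrative assumptions, not part of any Microsoft product or a prescribed formula. An actual Agent PMO would choose its own scales and weights.

```python
from dataclasses import dataclass, field


@dataclass
class IntakeRequest:
    """One copilot or agent request, using the intake fields described above."""
    title: str
    business_problem: str
    expected_value: int      # hypothetical 1-5 score, 5 = highest value
    data_sensitivity: int    # hypothetical 1-5 score, 5 = most sensitive
    complexity: int          # hypothetical 1-5 score, 5 = most complex
    systems_touched: list[str] = field(default_factory=list)
    target_users: str = ""
    required_approvals: list[str] = field(default_factory=list)


def priority_score(req: IntakeRequest) -> int:
    # Assumed weighting: value counts double, sensitivity and complexity subtract.
    return 2 * req.expected_value - req.data_sensitivity - req.complexity


def prioritize(requests: list[IntakeRequest]) -> list[IntakeRequest]:
    # Highest score first, so ordering reflects value and risk, not arrival time.
    return sorted(requests, key=priority_score, reverse=True)
```

Calling `prioritize` on a list of requests returns them ordered by score rather than by who asked first. The scoring function is deliberately simple so that business stakeholders can read and challenge it.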

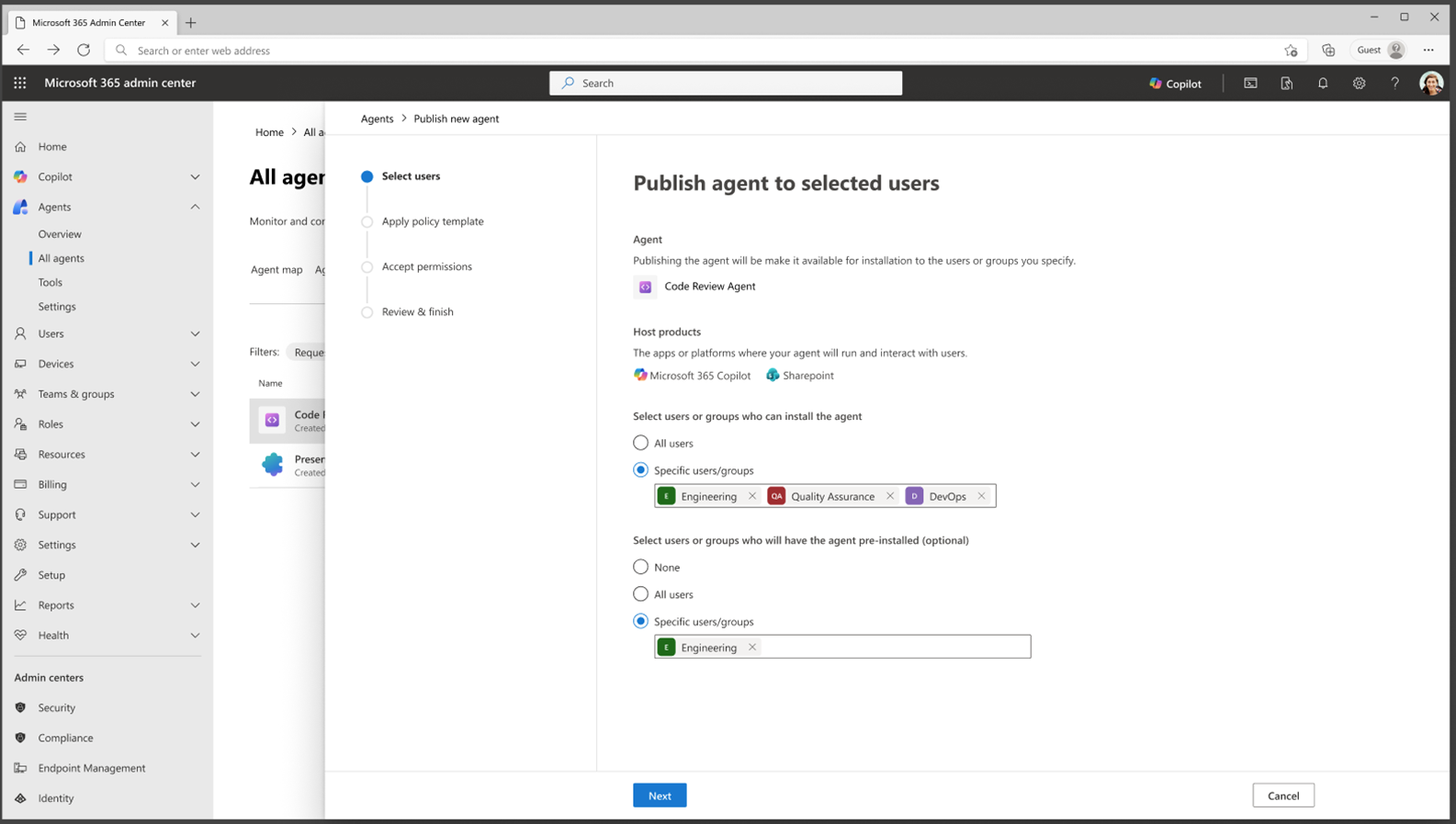

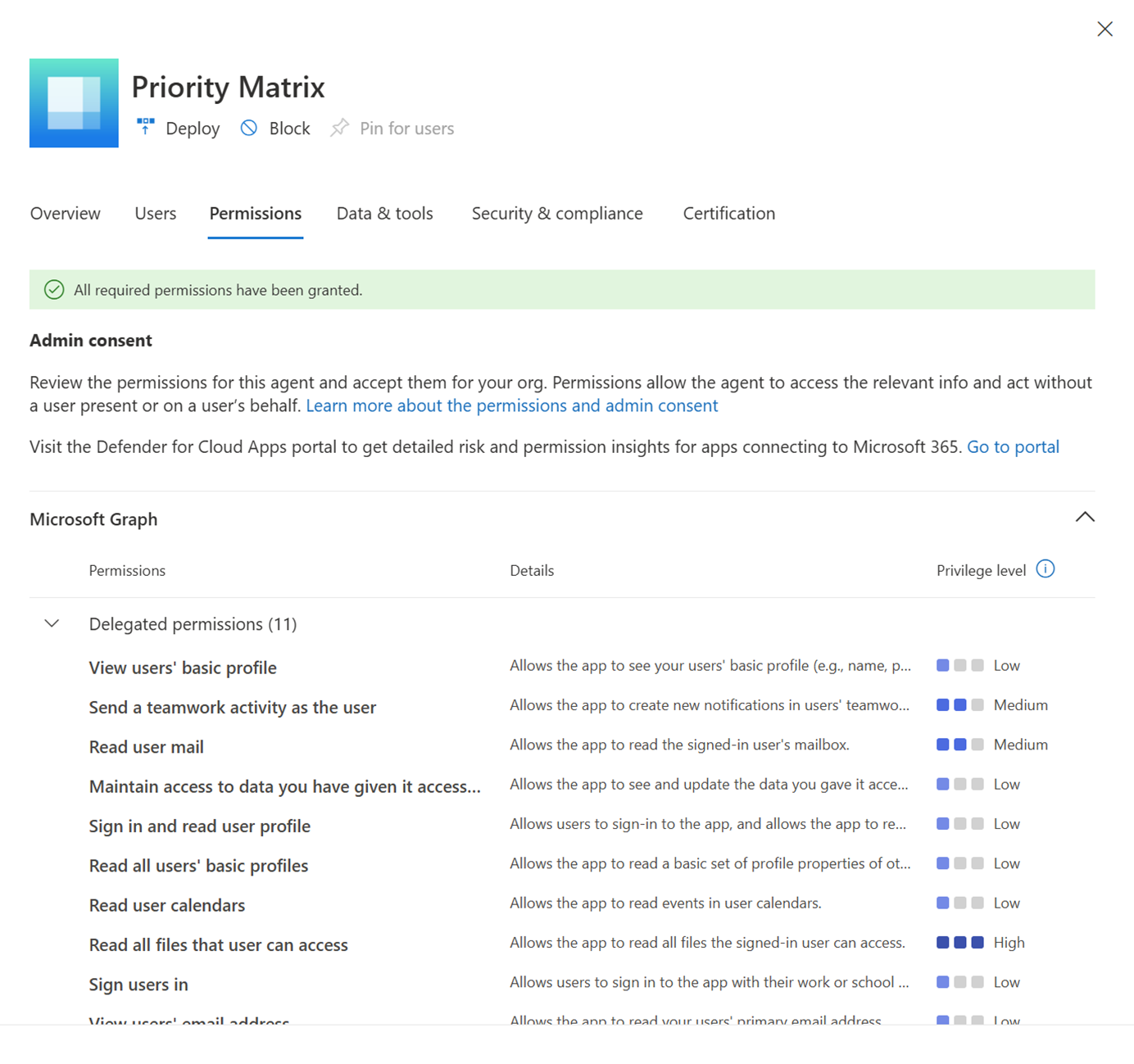

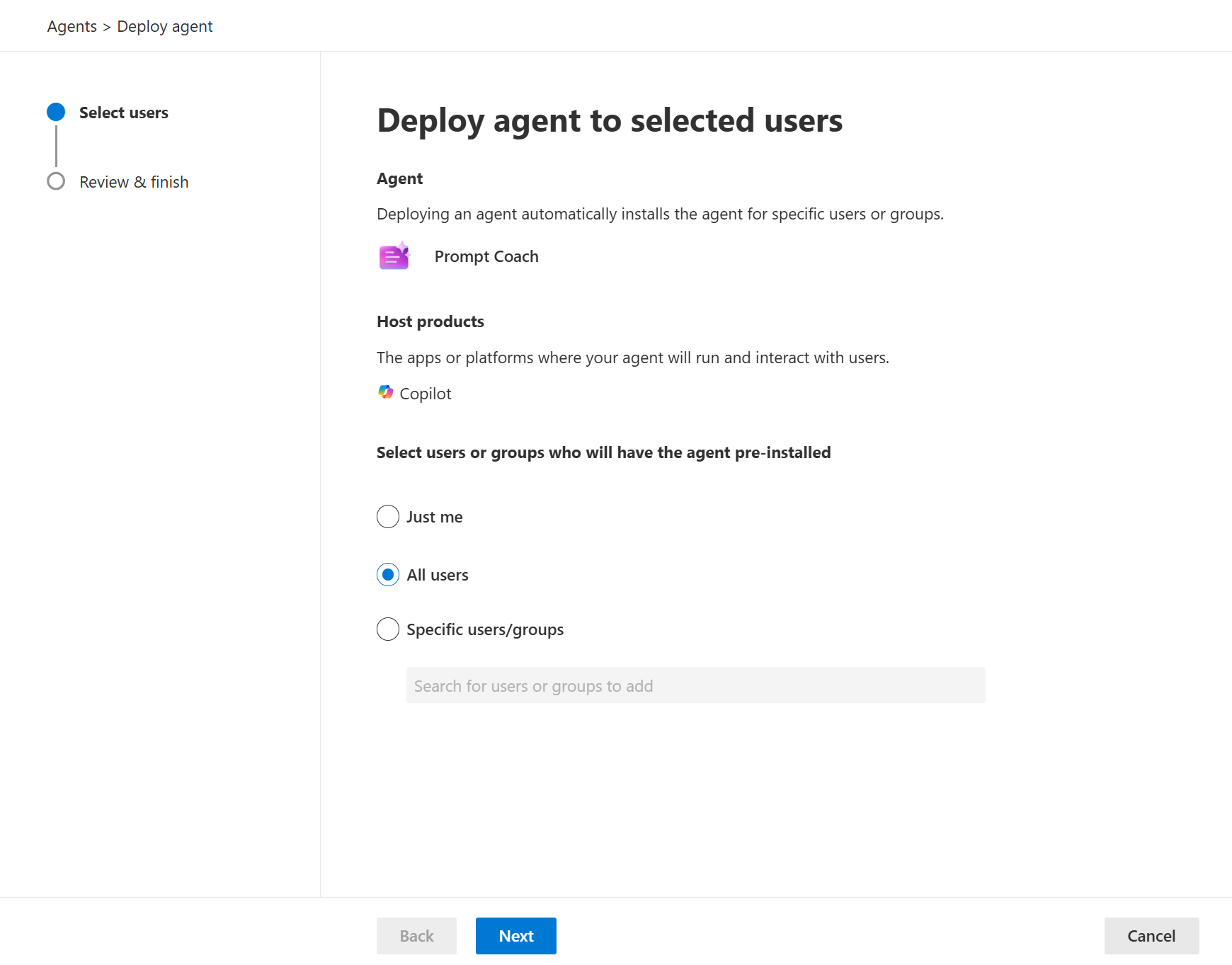

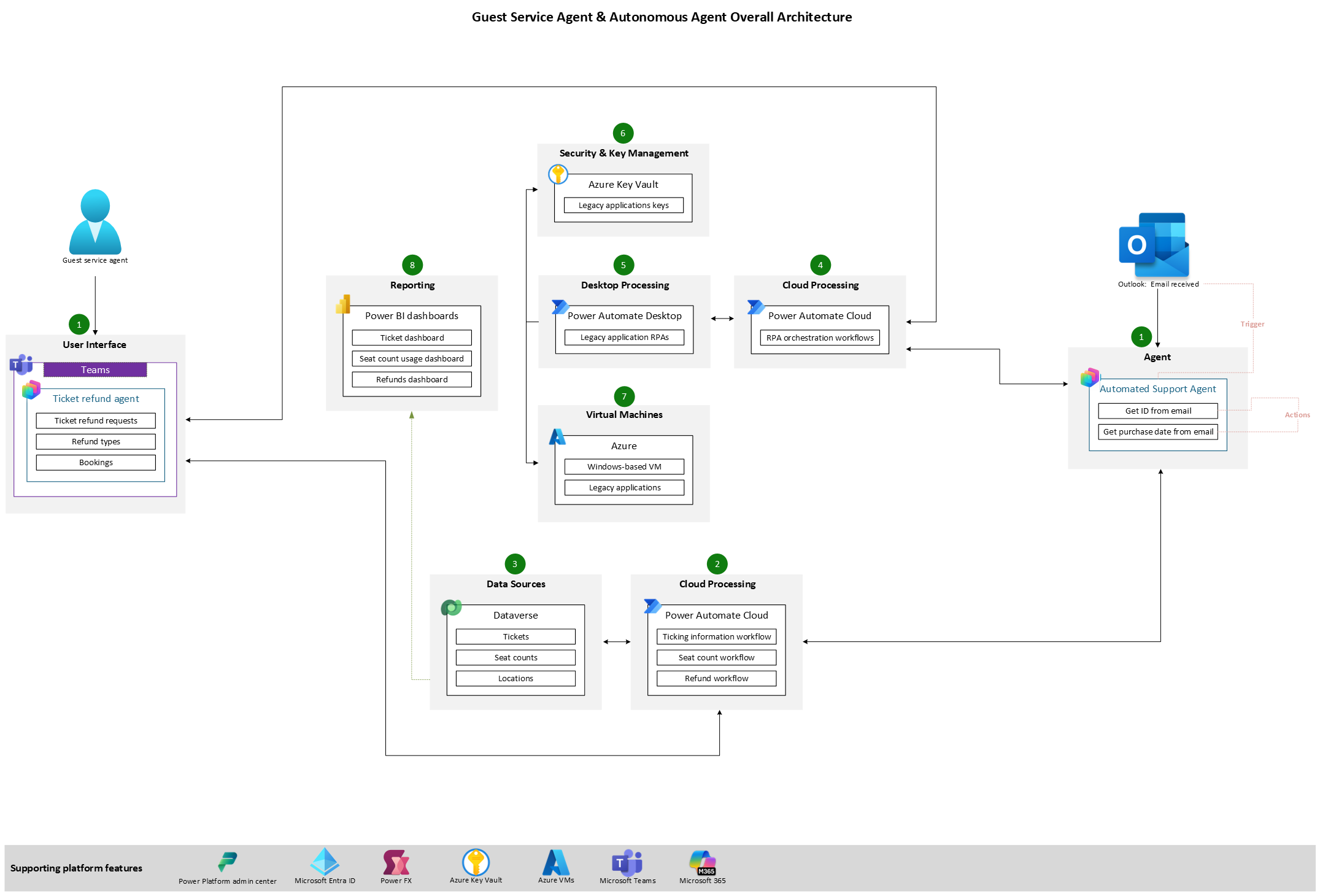

Once a request passes intake, the next workflow should focus on access, risk, and ownership. This is where Copilot access management, agent access control, and AI risk reviews become central governance questions rather than optional extras.

This workflow should define who reviews data exposure, who signs off on the use case, what systems the agent can connect to, what actions it can take, and who owns the agent after launch.

If ownership stays vague, governance turns into a series of scattered approvals rather than a working operating model.

This is where fusion team governance becomes real. Security, IT, business stakeholders, makers, and operations all have different responsibilities, and the workflow should make those handoffs clear. If nobody can say who owns an agent, what information it can reach, and what controls apply, then the organization does not yet have a functioning agent governance model.
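The review questions in this section can be treated as a checklist that must be complete before an agent moves forward. The sketch below is one hypothetical way to express that in Python. The `AccessReview` fields and the `open_questions` helper are assumed names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class AccessReview:
    """Answers to the risk and access questions for a single agent."""
    agent_name: str
    data_exposure_reviewer: str = ""
    use_case_approver: str = ""
    post_launch_owner: str = ""
    allowed_systems: list[str] = field(default_factory=list)
    allowed_actions: list[str] = field(default_factory=list)


REQUIRED_FIELDS = [
    "data_exposure_reviewer",
    "use_case_approver",
    "post_launch_owner",
    "allowed_systems",
    "allowed_actions",
]


def open_questions(review: AccessReview) -> list[str]:
    # A field counts as unanswered when it is an empty string or an empty list.
    return [name for name in REQUIRED_FIELDS if not getattr(review, name)]
```

An empty result from `open_questions` means every question has a named answer. A non-empty result points to exactly where ownership or control is still vague.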

A third workflow every Agent PMO should standardize is agent lifecycle management. Governance cannot stop at approval. It has to continue through build, testing, release, change management, and retirement.

Organizations need a standard process for testing, deployment, versioning, updates, rollback, and retirement. This matters even more when agents span multiple environments, rely on connectors, or trigger downstream workflows.

A release workflow should also support cross-environment tracking so teams know what is in development, what is in production, and what is being retired or replaced.

Without this workflow, teams often end up with disconnected pilots and fragile handoffs. With it, the Agent PMO can bring structure to how agents move through environments and how operational risk is reduced before users ever see a production experience.
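One way to picture a standard lifecycle is as a small set of stages with explicitly allowed moves between them. The following Python sketch assumes four stages and a particular transition table. Both are illustrative choices, and a real release process may have more stages or different rules.

```python
from enum import Enum


class Stage(Enum):
    BUILD = "build"
    TEST = "test"
    PRODUCTION = "production"
    RETIRED = "retired"


# Assumed transition rules: production can only be reached through test,
# and moving from production back to test represents a rollback.
ALLOWED = {
    Stage.BUILD: {Stage.TEST, Stage.RETIRED},
    Stage.TEST: {Stage.BUILD, Stage.PRODUCTION, Stage.RETIRED},
    Stage.PRODUCTION: {Stage.TEST, Stage.RETIRED},
    Stage.RETIRED: set(),
}


def move(current: Stage, target: Stage) -> Stage:
    """Return the new stage, or raise if the move skips a required step."""
    if target not in ALLOWED[current]:
        raise ValueError(f"{current.value} -> {target.value} is not allowed")
    return target
```

Recording each agent's current `Stage` per environment gives the cross-environment view described above: what is in development, what is in production, and what is being retired.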

Once agents are live, governance needs a workflow for monitoring what is actually happening. Monitoring should not be treated as just a support task. It is a governance function.

This workflow should standardize how runs are reviewed, how incidents are escalated, who investigates poor outcomes, what thresholds require intervention, and when an agent should be paused or changed.

It should also clarify how transcripts, metrics, and user signals feed back into governance decisions.

This workflow becomes even more important as agents become more capable and more distributed across teams. When there is no consistent issue response path, organizations may have controls on paper but very little operational resilience in practice.
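Intervention thresholds are easier to apply consistently when they are written down as a rule rather than left to judgment in the moment. The sketch below shows one possible rule in Python. The function name, the 5% and 20% failure-rate thresholds, and the action labels are assumptions for illustration only.

```python
def recommend_action(
    total_runs: int,
    failed_runs: int,
    escalations: int,
    review_at: float = 0.05,   # assumed threshold: review at 5% failed runs
    pause_at: float = 0.20,    # assumed threshold: pause at 20% failed runs
) -> str:
    """Map run statistics for a review period to a governance action."""
    if total_runs == 0:
        return "no_data"
    failure_rate = failed_runs / total_runs
    if failure_rate >= pause_at:
        return "pause"
    # Any user escalation triggers a review, even when failures are rare.
    if failure_rate >= review_at or escalations > 0:
        return "review"
    return "ok"
```

The specific numbers matter less than the fact that they are agreed in advance, so that pausing an agent is a defined response rather than an improvised one.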

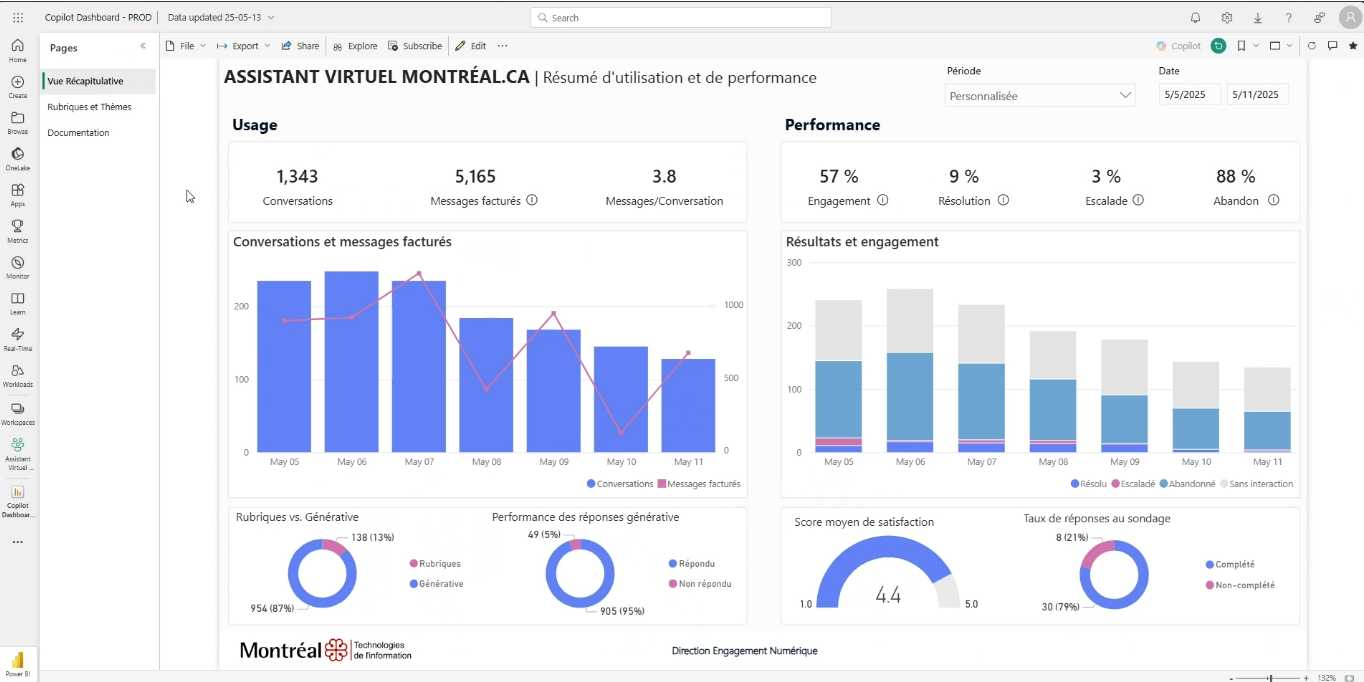

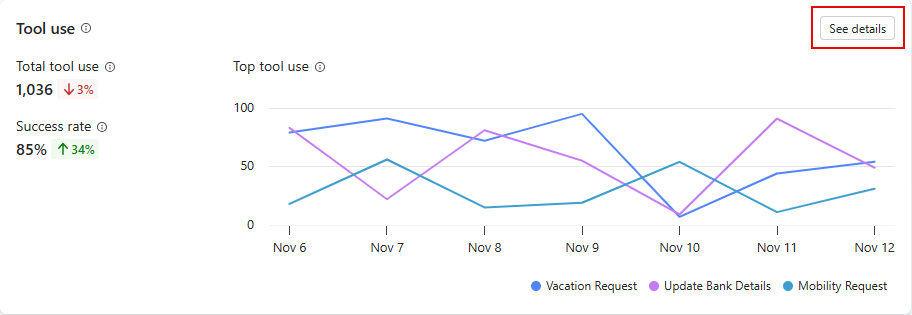

The fifth workflow is measurement. Reporting, KPI tracking, and performance insight should be treated as part of governance rather than something separate from it.

A standardized measurement workflow should define the AI success metrics that matter for each use case. Depending on the scenario, that might include adoption, resolution rate, escalation rate, answer quality, user satisfaction, time saved, or task automation outcomes.

The important thing is that each agent has a clear purpose and a clear way to assess whether it is delivering on that purpose.

This workflow also helps leadership make better portfolio decisions. When the Agent PMO can show what is delivering value, what is underperforming, and where risk is rising, governance becomes more credible and more useful. That is how a Microsoft Copilot governance framework becomes an operating system for decision-making rather than a set of disconnected controls.
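Several of the metrics named above, such as resolution rate, escalation rate, and time saved, can be computed from simple per-session records. The Python sketch below assumes each session is a dictionary with `resolved`, `escalated`, and `minutes_saved` keys. That record shape is hypothetical and would need to match whatever telemetry an organization actually collects.

```python
def summarize(sessions: list[dict]) -> dict:
    """Aggregate per-session records into a few portfolio-level metrics."""
    total = len(sessions)
    if total == 0:
        return {"sessions": 0, "resolution_rate": 0.0,
                "escalation_rate": 0.0, "minutes_saved": 0}
    resolved = sum(1 for s in sessions if s["resolved"])
    escalated = sum(1 for s in sessions if s["escalated"])
    return {
        "sessions": total,
        "resolution_rate": resolved / total,
        "escalation_rate": escalated / total,
        "minutes_saved": sum(s["minutes_saved"] for s in sessions),
    }
```

Running the same summary for every agent gives the Agent PMO a comparable view across the portfolio, which supports the decisions about what is delivering value and what is underperforming.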

Each workflow matters on its own, but the real value comes from standardizing them together. Intake helps decide what should move forward. Risk and access review helps determine what controls apply. Lifecycle management creates structure for change. Monitoring helps detect issues early. Measurement shows whether the work is actually paying off.

Together, those workflows create a practical agent operating model and a repeatable governance operating model for AI.

This is also what separates ad hoc experimentation from an actual Agent PMO. A PMO is not just a meeting. It is the operating layer that standardizes these workflows so the business can scale agents responsibly, support Power Automate governance where workflows intersect, and maintain clear accountability as demand grows.

Our Agent PMO event explored what it takes to move from governance theory to practical execution, including how organizations can standardize workflows for intake, risk review, lifecycle management, and measurement.
