Organizations often approach Copilot governance by focusing on controls first. They look at permissions, compliance, data access, and admin settings. Those things matter, but on their own, they do not create a governance model that can scale. Microsoft’s Copilot Control System is built around more than security alone. It also includes management controls and measurement, showing that governance must be operational, not just technical.

As usage expands, that gap becomes harder to ignore. Teams begin experimenting with copilots, custom agents, connected workflows, and new use cases faster than governance models can adapt. That is where AI agent governance starts to fail. The issue is not just that organizations lack controls. It is that many of them do not have a clear agent governance model, a shared ownership structure, or a practical governance operating model for AI that connects policy to execution. Without that foundation, governance becomes reactive, fragmented, and difficult to scale.

That is why governance for AI agents fails without an agent operating model. If organizations want governance that can keep up with innovation, they need more than isolated controls. They need a way to define ownership, assess risk, manage agent lifecycle decisions, and measure value over time.

Many organizations assume that once the right controls are in place, governance is covered. In practice, that is where problems begin. A governance tool can help enforce policy, but it cannot decide who owns approvals, who reviews risk, who manages rollout, or who is responsible for outcomes. A true Microsoft Copilot governance framework needs operating discipline behind it. Otherwise, governance stays theoretical while real-world deployment moves ahead without enough structure.

That is why governance execution matters just as much as governance design. Organizations need a lightweight governance framework that can support innovation without losing visibility or control. It should help manage Copilot risk and compliance, while also creating a repeatable process for approvals, exceptions, measurement, and oversight. Without that kind of model, governance tends to become either too slow to support the business or too loose to manage risk effectively.

Even strong technical controls can fail when they are not supported by a working operating model. New agents appear without clear review paths. Business teams move ahead with local priorities. Security and IT get pulled in later, once risks are already emerging. The result is confusion around ownership, limited visibility into what is live, and no consistent way to scale AI governance across departments or environments.

One of the biggest reasons Copilot governance breaks down is unclear ownership. In many organizations, responsibility is divided across IT, security, business teams, makers, and operations, but nobody owns the full lifecycle. One team may manage deployment, another may handle compliance, and another may be expected to support the experience once it is live. That fragmented structure makes it difficult to govern consistently because no single group is accountable for how copilots and agents are approved, monitored, improved, or retired.

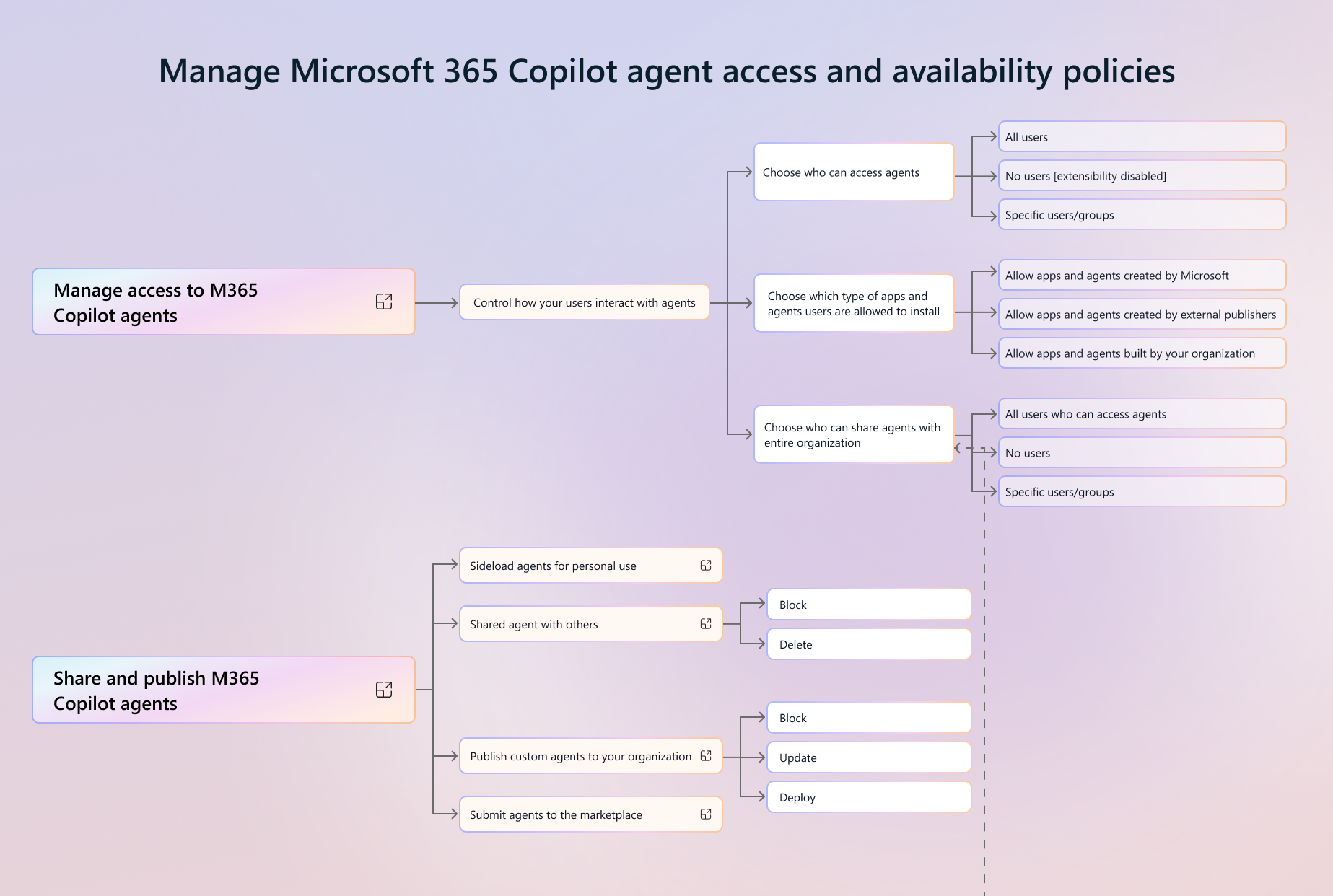

This is where fusion team governance becomes critical. Copilot and agents do not sit neatly inside one function. They cut across business priorities, technical design, security requirements, process change, and adoption. That means governance needs to reflect a cross-functional model, not a siloed one. A strong agent governance model should clearly define who can request a new agent, who reviews it, who approves it, who supports it, and who owns the business outcome.
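To make those ownership questions concrete, here is a minimal sketch of an ownership record for a single agent. It is an illustration only: the `AgentOwnership` class, its field names, and the `missing_roles` helper are hypothetical and do not correspond to any Microsoft API or product feature. The idea is simply that each of the five roles named above becomes an explicit field, so gaps are visible before an agent moves forward.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class AgentOwnership:
    """Hypothetical ownership record; one field per role named in the text."""
    agent_name: str
    requester: Optional[str] = None       # who asked for the agent
    reviewer: Optional[str] = None        # who reviews it (e.g. security, IT)
    approver: Optional[str] = None        # who signs off on deployment
    support_owner: Optional[str] = None   # who supports it once live
    business_owner: Optional[str] = None  # who owns the business outcome


def missing_roles(record: AgentOwnership) -> List[str]:
    """Return the role fields that have no assigned owner yet."""
    return [
        f.name
        for f in fields(record)
        if f.name != "agent_name" and not getattr(record, f.name)
    ]


if __name__ == "__main__":
    # Example values are made up for illustration.
    record = AgentOwnership(
        agent_name="expense-helper",
        requester="finance-ops",
        reviewer="security-team",
    )
    print(missing_roles(record))
```

A check like this could run at intake, so that a request with unassigned roles is sent back before review starts rather than discovered after rollout.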

An effective agent operating model should also define agent access control, Copilot access management, and the process for conducting AI risk reviews before broader deployment. Those responsibilities cannot be assumed or handled informally. If ownership stays vague, governance becomes inconsistent, and what starts as a controlled rollout quickly turns into sprawl.

A major weakness in many governance efforts is that they focus too heavily on launch and not enough on what happens after. But agents are not static tools. Their behavior, value, and risk profile can change over time depending on prompts, permissions, connected data, workflow logic, and how people actually use them. That is why AI agent governance must include agent lifecycle management from the start, rather than treating governance as a one-time approval step.

A mature operating model needs clear AI governance workflows for intake, review, testing, rollout, monitoring, escalation, and retirement. Those workflows should answer practical questions: What qualifies an agent for production use? Who reviews changes after deployment? What happens when an agent underperforms or creates risk? How is performance tracked across use cases and business units? Without those answers, governance becomes patchy and teams end up improvising decisions that should have been standardized.
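One way to standardize those decisions is to treat the lifecycle as a small state machine. The sketch below assumes the seven stages listed above; the allowed transitions in `ALLOWED` are an assumption made for illustration, and each organization would define its own. `Stage` and `advance` are hypothetical names, not part of any product.

```python
from enum import Enum


class Stage(Enum):
    INTAKE = "intake"
    REVIEW = "review"
    TESTING = "testing"
    ROLLOUT = "rollout"
    MONITORING = "monitoring"
    ESCALATION = "escalation"
    RETIREMENT = "retirement"


# Assumed transition rules: which stages an agent may move to from each stage.
ALLOWED = {
    Stage.INTAKE: {Stage.REVIEW, Stage.RETIREMENT},
    Stage.REVIEW: {Stage.TESTING, Stage.INTAKE, Stage.RETIREMENT},
    Stage.TESTING: {Stage.ROLLOUT, Stage.REVIEW},
    Stage.ROLLOUT: {Stage.MONITORING},
    Stage.MONITORING: {Stage.ESCALATION, Stage.REVIEW, Stage.RETIREMENT},
    Stage.ESCALATION: {Stage.REVIEW, Stage.MONITORING, Stage.RETIREMENT},
    Stage.RETIREMENT: set(),  # terminal stage
}


def advance(current: Stage, target: Stage) -> Stage:
    """Move to the target stage, or raise if the transition is not allowed."""
    if target not in ALLOWED[current]:
        raise ValueError(
            f"Cannot move from {current.value} to {target.value}"
        )
    return target


if __name__ == "__main__":
    stage = advance(Stage.INTAKE, Stage.REVIEW)  # allowed
    print(stage.value)
    try:
        advance(Stage.INTAKE, Stage.ROLLOUT)     # skips review and testing
    except ValueError as err:
        print(err)
```

Note that under these assumed rules a change to a monitored agent routes back through review, which is one way to answer the question of who reviews changes after deployment.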

This is also where a more formal AI PMO (project management office) model can help. Whether organizations call it an Agent PMO or something else, the point is the same: someone needs to coordinate governance across initiatives, ensure standards are being followed, and provide visibility across environments. In larger organizations, cross-environment tracking becomes especially important, since leaders need to know where agents are deployed, what systems they can access, and whether they are delivering measurable value.
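As a rough sketch of what cross-environment tracking could look like, the example below keeps a simple inventory and summarizes it per environment: which agents are deployed, which systems they can reach, and which have no value metric defined yet. The `AgentRecord` fields, the `summarize` function, and all sample data are hypothetical; a real inventory would likely be populated from admin tooling rather than by hand.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class AgentRecord:
    """Hypothetical inventory entry for one deployed agent."""
    name: str
    environment: str
    connected_systems: List[str] = field(default_factory=list)
    value_metric: Optional[str] = None  # e.g. "hours saved per month"


def summarize(records: List[AgentRecord]) -> Dict[str, dict]:
    """Group agents by environment and flag those without a value metric."""
    summary: Dict[str, dict] = {}
    for rec in records:
        env = summary.setdefault(
            rec.environment,
            {"agents": [], "systems": set(), "unmeasured": []},
        )
        env["agents"].append(rec.name)
        env["systems"].update(rec.connected_systems)
        if rec.value_metric is None:
            env["unmeasured"].append(rec.name)
    # Convert sets to sorted lists so the output is stable and easy to read.
    for env in summary.values():
        env["systems"] = sorted(env["systems"])
    return summary


if __name__ == "__main__":
    # Sample data is invented for illustration.
    inventory = [
        AgentRecord("hr-faq", "production", ["SharePoint"], "tickets deflected"),
        AgentRecord("expense-helper", "production", ["SharePoint", "ERP"]),
        AgentRecord("sales-pilot", "sandbox", ["CRM"]),
    ]
    for env_name, details in summarize(inventory).items():
        print(env_name, details)
```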

A strong agent operating model is not just about reducing risk. It is also what helps organizations scale responsibly while showing clear business value. Governance works best when it supports the business, not when it simply blocks progress. That means organizations need a model that connects use case prioritization, approvals, delivery standards, support expectations, and measurement into one system that can be applied consistently.

This is where an Agent PMO approach becomes valuable. It creates structure around how agents are proposed, reviewed, deployed, and evaluated. It can also function as a practical AI PMO model for coordinating work across stakeholders, setting standards, and ensuring that governance is tied to delivery instead of sitting on the sidelines. The right model makes it easier to align technical controls with business outcomes, while still keeping pace with demand for experimentation and scale.

In practice, that means organizations need more than a policy deck. They need a model that can define decision rights, establish standards, support AI success metrics, and create visibility into whether governance is actually working. When that structure is in place, governance becomes an enabler. When it is missing, teams are left with disconnected pilots, unclear ownership, and very little evidence of value.

The core problem is that many organizations still approach governance as if a policy alone will solve it. But policies do not manage intake, they do not assign ownership, and they do not create operational follow-through. What organizations actually need is a governance model that can support real deployment. The goal should be governance without slowing innovation, not governance that creates friction every time a team wants to move forward with a useful idea.

That model should account for how copilots, agents, automations, and supporting workflows interact. In some cases, that also means aligning agent governance with related disciplines such as Power Automate governance, especially when agents trigger workflows, approvals, or process changes behind the scenes. Governance cannot stay isolated if the technology itself is interconnected. It has to reflect how work is actually happening across the organization.

In the end, the difference between stalled experimentation and scalable transformation is not whether an organization has a few controls in place. It is whether it has an operating model that supports decision-making, accountability, lifecycle oversight, and measurable value. That is what turns governance from a loose collection of policies into something the business can actually rely on.

If you are thinking through how to make Copilot governance practical across the business, this is the conversation to join.
