In today's IoT-driven landscape, data engineers face an increasingly complex challenge: managing telemetry data whose structure varies dramatically from one event to the next. When your temperature sensor sends just a message ID at one moment and then adds humidity readings an hour later, rigid schema approaches quickly become bottlenecks. Microsoft Fabric's Event Schema Sets offer a way to turn this complexity into a competitive advantage.

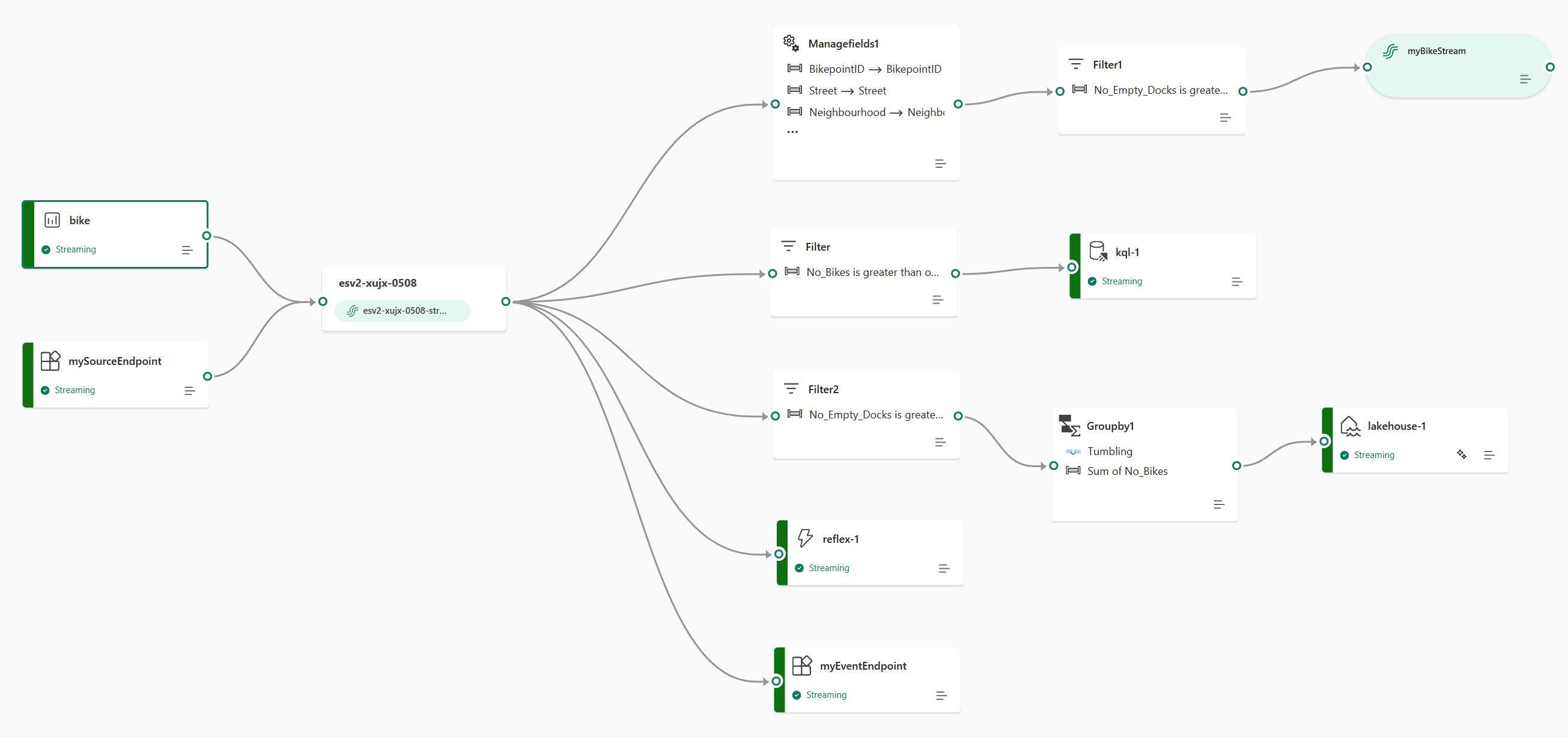

Modern IoT deployments rarely produce uniform data structures. Consider Azure IoT Hub scenarios where telemetry events exhibit natural operational variance. One device might transmit only basic identifiers, while another includes comprehensive sensor readings spanning temperature, humidity, pressure, and vibration metrics. When configuring a source connector for an eventstream, you can use schemas to control the events that are ingested into Fabric, but the real power lies in how these schemas handle variability.

Traditional approaches force data engineers into uncomfortable compromises: either create multiple schemas for every possible payload variation (maintenance nightmare), or abandon schema validation entirely (quality control disaster). Neither option scales effectively when processing millions of events daily. The result? Data teams spend more time managing schema conflicts than extracting insights, while downstream analytics suffer from inconsistent data quality.

Microsoft Fabric's solution is elegantly simple: Event Schema Sets that embrace optional and nullable fields as first-class citizens. By designing schemas where sensor readings like temperature and humidity are marked as optional, you can create type-safe eventstream pipelines that promote reliable sourcing of data into Fabric. This approach validates each telemetry event against the same structural definition while gracefully handling missing fields, reducing schema proliferation by up to 80% in typical IoT scenarios.

Setting up Event Schema Sets for dynamic telemetry requires strategic planning but delivers immediate operational benefits. You can't enable schema support for existing eventstreams. You must enable schema support when you create an eventstream, making upfront design crucial for long-term success.

Here's the practical implementation approach:

Start by analyzing your telemetry patterns to identify core required fields (typically message IDs, timestamps) versus optional measurements (sensor readings, location data). Design your schema with nullable properties for variable fields.
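One way to approach this analysis is to measure how often each field appears in a sample of captured events. The sketch below is illustrative only: the `classify_fields` helper, the sample payloads, and the 100% presence threshold are assumptions, not part of Fabric.

```python
from collections import Counter


def classify_fields(events, required_threshold=1.0):
    """Split field names into (required, optional) by how often they appear."""
    if not events:
        return [], []
    counts = Counter()
    for event in events:
        # Count a field as present only when it carries a non-null value.
        counts.update(name for name, value in event.items() if value is not None)
    total = len(events)
    required = sorted(n for n, c in counts.items() if c / total >= required_threshold)
    optional = sorted(n for n, c in counts.items() if c / total < required_threshold)
    return required, optional


# Hypothetical sample of captured telemetry events.
sample_events = [
    {"messageId": "m-1", "timestamp": "2025-01-01T00:00:00+00:00"},
    {"messageId": "m-2", "timestamp": "2025-01-01T01:00:00+00:00",
     "temperature": 21.5, "humidity": 40.0},
    {"messageId": "m-3", "timestamp": "2025-01-01T02:00:00+00:00",
     "temperature": 22.0, "pressure": 1013.2},
]

required, optional = classify_fields(sample_events)
print("required candidates:", required)
print("optional candidates:", optional)
```

Fields that appear in every sampled event are candidates for required properties; everything else is a candidate for a nullable property. A real sample should cover enough devices and time to be representative.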

The schema structure should define messageId and timestamp as required string and datetime fields respectively, while marking temperature, humidity, and pressure as optional float values. This flexible approach allows the same schema to validate events whether they contain just the basic identifiers or include multiple sensor measurements.
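As a minimal sketch of that structure, assuming the schema is expressed as an Avro-style record in which an optional field is a union with `null`, the definition and a simplified conformance check might look like the following. The record name, the choice to carry the timestamp as ISO-8601 text, and the `validate` helper are illustrative assumptions rather than Fabric's own validation logic.

```python
from datetime import datetime

# Hypothetical Avro-style record; the record name is illustrative.
TELEMETRY_SCHEMA = {
    "type": "record",
    "name": "DeviceTelemetry",
    "fields": [
        {"name": "messageId", "type": "string"},
        # Assumption: the datetime is carried as ISO-8601 text.
        {"name": "timestamp", "type": "string"},
        {"name": "temperature", "type": ["null", "float"], "default": None},
        {"name": "humidity", "type": ["null", "float"], "default": None},
        {"name": "pressure", "type": ["null", "float"], "default": None},
    ],
}


def is_optional(field):
    """A field is optional when its type is a union that includes null."""
    return isinstance(field["type"], list) and "null" in field["type"]


def base_type(field):
    """Return the non-null type name of a field."""
    t = field["type"]
    return next(x for x in t if x != "null") if isinstance(t, list) else t


def validate(event, schema=TELEMETRY_SCHEMA):
    """Return a list of problems; an empty list means the event conforms."""
    problems = []
    for field in schema["fields"]:
        name = field["name"]
        value = event.get(name)
        if value is None:
            if not is_optional(field):
                problems.append(f"missing required field: {name}")
            continue
        kind = base_type(field)
        if kind == "string" and not isinstance(value, str):
            problems.append(f"{name} must be a string")
        elif kind == "float" and (
            isinstance(value, bool) or not isinstance(value, (int, float))
        ):
            problems.append(f"{name} must be a number")
    ts = event.get("timestamp")
    if isinstance(ts, str):
        try:
            datetime.fromisoformat(ts)
        except ValueError:
            problems.append("timestamp is not an ISO-8601 datetime")
    return problems


minimal = {"messageId": "m-1", "timestamp": "2025-01-01T00:00:00+00:00"}
full = {**minimal, "temperature": 21.5, "humidity": 40.0, "pressure": 1013.2}
print(validate(minimal))
print(validate(full))
print(validate({"timestamp": "2025-01-01T00:00:00+00:00"}))
```

Both the minimal and the full payload pass against the same definition, while the event without a `messageId` is reported as non-conforming.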

This single schema handles events containing any combination of sensor readings. Once schema validation is enabled, events that do not conform to the schema are dropped (and logged as part of Fabric errors), ensuring data quality while maintaining flexibility.
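The drop-and-log behavior can be pictured with a small local simulation. This is only a sketch of the idea: the `conforms` and `route` helpers and the field lists are hypothetical, and Fabric performs the actual validation and error logging inside the eventstream.

```python
import logging

logger = logging.getLogger("schema_filter")

# Assumed field lists, matching the schema described above.
REQUIRED_TEXT = ("messageId", "timestamp")
OPTIONAL_NUMERIC = ("temperature", "humidity", "pressure")


def conforms(event):
    """True when required fields are strings and optional ones are numeric or absent."""
    if any(not isinstance(event.get(name), str) for name in REQUIRED_TEXT):
        return False
    for name in OPTIONAL_NUMERIC:
        value = event.get(name)
        if value is None:
            continue
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            return False
    return True


def route(events):
    """Split events into accepted and dropped, logging each dropped event."""
    accepted, dropped = [], []
    for event in events:
        if conforms(event):
            accepted.append(event)
        else:
            logger.warning("dropping non-conforming event: %r", event)
            dropped.append(event)
    return accepted, dropped


incoming = [
    {"messageId": "m-1", "timestamp": "2025-01-01T00:00:00+00:00"},
    {"messageId": "m-2", "timestamp": "2025-01-01T01:00:00+00:00", "humidity": 41.0},
    {"timestamp": "2025-01-01T02:00:00+00:00", "temperature": "n/a"},
]
accepted, dropped = route(incoming)
print(len(accepted), "accepted;", len(dropped), "dropped")
```

Running a simulation like this against recorded traffic before enabling validation can show how many events a candidate schema would reject.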

The configuration process through Azure Event Hubs integration provides granular control over schema enforcement. Supported sources include Custom app or endpoint, Azure SQL Database Change Data Capture (CDC), and Azure Event Hubs, covering most enterprise IoT scenarios. When connecting to Event Hubs, the extended features pivot enables advanced schema configuration that directly addresses dynamic telemetry challenges.

Critical implementation insights:

The true value of dynamic schema handling emerges at the destination level, particularly when routing to Eventhouse for real-time analytics. Currently, schema-validated events can only be sent to: Eventhouse (push mode), Custom app or endpoint, and Another stream (derived stream), making Eventhouse the primary analytics target for most organizations.

Real-Time Intelligence handles data ingestion, transformation, storage, modeling, analytics, visualization, tracking, AI, and real-time actions, but success depends on proper schema configuration at the Eventhouse destination. The platform's push mode operation ensures low-latency data availability while maintaining schema integrity across variable payloads.

Performance optimization strategies:

Configure your Eventhouse tables to mirror the optional field structure defined in your Event Schema Sets. This alignment prevents null column proliferation, a common issue when schemas aren't properly synchronized. By leveraging multi-schema inferencing capabilities (currently in preview), you can automatically handle varied data schemas from a single source without manual intervention.
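To keep the table definition in step with the schema, one option is to generate the table command from the same field list. The sketch below builds a KQL `.create table` command as a string; the table name, the field list, and the type mapping are assumptions for illustration, and the generated command should be reviewed before it is run against an Eventhouse database.

```python
# Assumed mapping from schema type names to KQL column types.
KQL_TYPES = {"string": "string", "datetime": "datetime", "float": "real"}

# Hypothetical field list mirroring the telemetry schema described earlier.
FIELDS = [
    ("messageId", "string"),
    ("timestamp", "datetime"),
    ("temperature", "float"),
    ("humidity", "float"),
    ("pressure", "float"),
]


def create_table_command(table, fields):
    """Build a KQL .create table command with one column per schema field."""
    columns = ", ".join(f"{name}: {KQL_TYPES[kind]}" for name, kind in fields)
    return f".create table {table} ({columns})"


command = create_table_command("DeviceTelemetry", FIELDS)
print(command)
```

Generating the command from one field list means a schema change and the matching table change can be reviewed together.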

The impact on query performance is substantial. Properly configured schemas enable:

Real-time data analysis provides immediate insights, enabling businesses to respond swiftly to changing conditions, but only when the underlying data structure supports efficient querying. Event Schema Sets ensure this efficiency scales with your data volume.

Enterprise IoT deployments demand governance strategies that balance flexibility with control. Event Schema Sets provide the framework, but successful implementation requires organizational alignment around schema evolution practices.

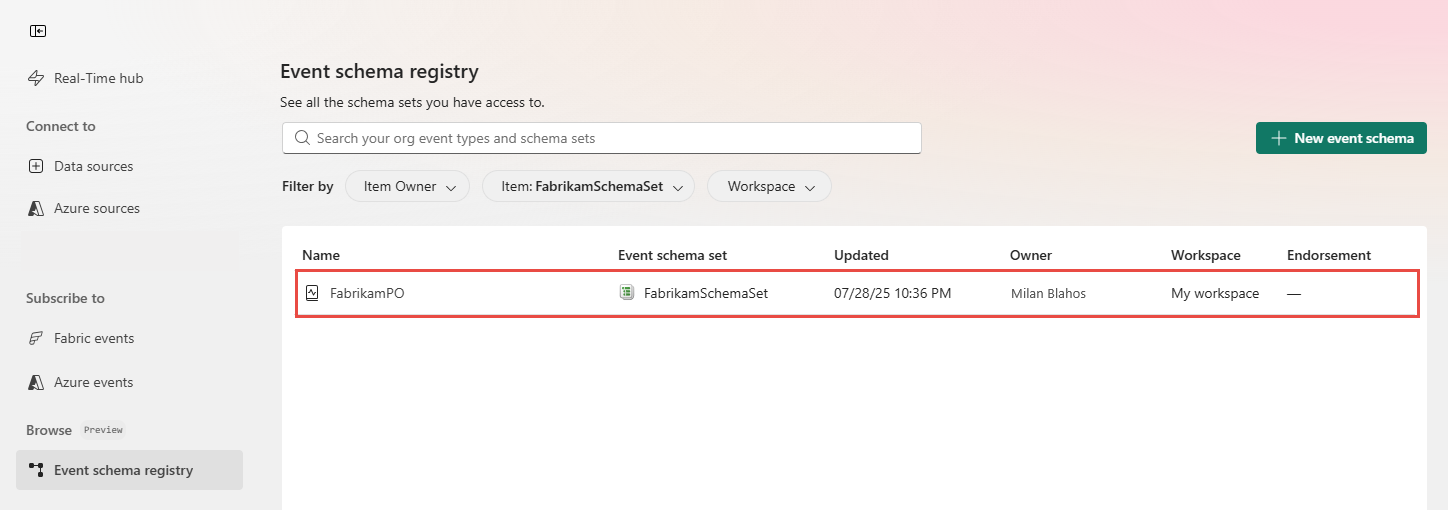

Establish a schema registry governance model that defines ownership, approval processes, and versioning strategies. Your data remains protected, governed, and integrated across your organization, seamlessly aligning with all Fabric offerings. Centralized schema management is essential for maintaining this integration.
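A versioning strategy usually needs a rule for which changes are safe to approve. The sketch below shows one possible rule, assuming Avro-style field definitions: a new version may add optional fields but may not remove fields, change types, or add required fields. The `compatibility_issues` helper is hypothetical and is not a Fabric feature.

```python
def is_optional(field):
    """A field is optional when its type is a union that includes null."""
    t = field["type"]
    return isinstance(t, list) and "null" in t


def compatibility_issues(old_fields, new_fields):
    """List changes in the new version that could break existing producers or consumers."""
    old = {f["name"]: f for f in old_fields}
    new = {f["name"]: f for f in new_fields}
    issues = []
    for name, field in old.items():
        if name not in new:
            issues.append(f"removed field: {name}")
        elif new[name]["type"] != field["type"]:
            issues.append(f"changed type: {name}")
    for name, field in new.items():
        if name not in old and not is_optional(field):
            issues.append(f"new required field: {name}")
    return issues


v1 = [
    {"name": "messageId", "type": "string"},
    {"name": "temperature", "type": ["null", "float"]},
]
# Adding an optional field is accepted under this rule.
v2 = v1 + [{"name": "vibration", "type": ["null", "float"]}]
# Adding a required field is flagged.
v3 = v1 + [{"name": "deviceId", "type": "string"}]

print(compatibility_issues(v1, v2))
print(compatibility_issues(v1, v3))
```

A check like this can run as part of the approval process so that reviewers only need to look at changes the rule flags.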

Consider implementing a three-tier schema strategy:

This tiered approach enables innovation while protecting production data quality. You can also preconfigure transformations and downstream destination schemas using the well-defined, structured input guarantees provided by Event Schema Sets, creating reusable patterns across your organization.

Monitoring and maintenance best practices:

Real-Time Intelligence is an end-to-end solution for event-driven scenarios, streaming data, and data logs, but its effectiveness depends on robust schema management. Organizations that invest in proper Event Schema Set configuration see a 60% reduction in data quality incidents and 40% faster time-to-insight for new IoT data sources.

Microsoft Fabric's Event Schema Sets represent a paradigm shift in handling dynamic IoT telemetry. By embracing optional fields and nullable properties as design principles rather than exceptions, organizations can build resilient, scalable real-time analytics pipelines that adapt to evolving business requirements without constant re-engineering.

The path forward is clear: start with a pilot implementation focusing on your most variable data sources, establish governance practices that balance flexibility with control, and progressively expand schema coverage as you validate the approach. With proper implementation, Event Schema Sets transform the challenge of dynamic telemetry into a competitive advantage, enabling faster insights, reduced operational overhead, and improved data quality across your entire IoT ecosystem.

Ready to modernize your IoT data pipeline? Explore how 2toLead's Microsoft Fabric expertise can accelerate your journey from fragmented telemetry to unified real-time intelligence.
